Are you familiar with A/B Testing (also called split testing or bucket testing)?

A/B Testing is like being in charge of a lab where new ideas are born and constantly tweaked.

With A/B Testing, you compare two versions of a web page, email subject line, social post or other piece of content against each other.

Each visitor is randomly shown one of the two versions. With the help of statistical data, you will be able to identify which version performs better.
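To make the random split a bit more concrete, here is a minimal Python sketch. It assumes every visitor has some kind of stable ID (a cookie value, for example), and the names `assign_variant` and `cta-color` are made up for illustration. Hashing the ID is one common way to do it, not the only one: it keeps the split close to 50/50 while making sure a returning visitor always sees the same version.

```python
import hashlib

def assign_variant(visitor_id: str, experiment: str = "cta-color") -> str:
    """Return "A" or "B" for a visitor, stable across repeat visits."""
    # Hash the experiment name together with the visitor ID so that
    # different experiments split the same audience differently.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    return "A" if int(digest, 16) % 2 == 0 else "B"

# Simulate 10,000 hypothetical visitors and count how many land in each bucket.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1

print(counts)  # the two buckets should be roughly equal in size
```

If you would rather re-roll the dice on every page view, `random.choice(["A", "B"])` works too, but then the same person may see both versions.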

These experiments can be very rewarding.

A/B Testing puts website optimization at the forefront of your strategy and quickly becomes a must for any affiliate who wants to achieve greater results.

At first glance, changing the color of a Call-to-action (CTA) button from red to green seems trivial. But A/B Testing can reveal that such a small change makes the difference between a good Click-through rate (CTR) for your offer … and an amazing one.
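How do you know a CTR difference between two buttons is real and not just luck? One standard option is a two-proportion z-test. The sketch below uses only the Python standard library. The click and view counts are invented for the example, and `two_proportion_z_test` is our own helper name, not part of any tool mentioned in this post.

```python
import math

def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Compare two CTRs; return (z score, two-sided p-value)."""
    ctr_a = clicks_a / views_a
    ctr_b = clicks_b / views_b
    # Pooled CTR under the assumption that both versions perform the same.
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (ctr_b - ctr_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical numbers: red button (A) vs. green button (B).
z, p_value = two_proportion_z_test(clicks_a=200, views_a=10_000,
                                   clicks_b=260, views_b=10_000)
print(f"z = {z:.2f}, p-value = {p_value:.4f}")
if p_value < 0.05:
    print("The difference is unlikely to be due to chance alone.")
else:
    print("Not enough evidence yet; keep the test running.")
```

A p-value under 0.05 is a common convention, not a law. Pick your threshold before the test starts and stick to it.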

The Many Advantages of A/B Testing

The beauty of A/B Testing is reflected in its use of measurable data.

You can then use this data to formulate hypotheses and have a better understanding of user behavior. It’s not hearsay or guessing anymore: you now have actual proof to work with!

A/B Testing lets you improve specific metrics like CTR, email open rates and awareness (just to name a few).

Here are other advantages of using A/B Testing:

- It can be fast: create two templates or simply change one value between your two versions and you’re good to go.

- It’s simple, yet it allows advanced analysis: since you’re only doing statistical comparisons between two versions, you can choose to focus your attention on finer details like click heatmaps.

- It offers flexibility: you have the liberty to A/B test basically anything on a website, from images to forms and fonts. Since you only have two versions to think about, making changes is quick and easy.

- It can lower your bounce rate: tweaking your website and offers just might be what’s needed to keep your visitors interested. The devil is in the details.

- It leads to increased sales: this goes without saying. A/B Testing helps you pinpoint the best-converting offers once you’ve done some retooling.

A/B Testing in 5 Steps

Here’s a blueprint we use at CrakRevenue each time A/B Testing is on the menu… which is often! In our 10+ years of experience online, this step-by-step approach is what has worked best for us.

Step 1: Measure

- Determine an objective you want to achieve.

- Determine your Key Performance Indicators (KPIs), such as ROI (in % or in $) and CTR.

Step 2: Prioritize

- Prioritize the most important spots, i.e., those that cost the most and have the highest traffic volume. With lower traffic volume, you need to run your tests longer. Prioritizing costly/high-volume spots accelerates the optimization process.
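Why does lower traffic mean longer tests? A rough estimate helps. The sketch below uses a textbook sample-size approximation for comparing two rates, with the usual defaults of 5% significance and 80% power. The CTR values and daily visitor counts are hypothetical, and the helper names are ours.

```python
import math
from statistics import NormalDist

def visitors_per_variant(baseline_ctr, expected_ctr, alpha=0.05, power=0.8):
    """Approximate visitors needed per version to detect the CTR change."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = (baseline_ctr * (1 - baseline_ctr)
                + expected_ctr * (1 - expected_ctr))
    effect = expected_ctr - baseline_ctr
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)

def days_needed(n_per_variant, daily_visitors, variants=2):
    """Days required when daily traffic is split evenly between versions."""
    return math.ceil(n_per_variant * variants / daily_visitors)

# Hypothetical goal: detect a CTR lift from 2.0% to 2.6%.
n = visitors_per_variant(baseline_ctr=0.020, expected_ctr=0.026)
for daily in (1_000, 10_000, 50_000):
    print(f"{daily:>6} visitors/day -> about {days_needed(n, daily)} day(s)")
```

The same lift takes far longer to confirm on a low-traffic spot, which is why high-volume spots are a good place to start.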

Step 3: A/B Test

- Choose the banners or campaigns you wish to test according to the different spots.

- Ensure continuous monitoring by double-checking data.

Step 4: Optimize

- Learn from your data and adjust your aim. What nets you the best ROI across the board? Put more weight (%) into what makes money. On the flip side, lighten the load of less profitable tests.

- Tests allow you to gather quality data about your traffic. Then, you’re able to:

i) Segment your traffic into different sources (by Geo, Device, etc.)

ii) Focus on what your traffic likes to see, then add or delete banners/campaigns accordingly
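Here is a small sketch of what segmenting can look like in practice. It groups made-up test results by Geo and Device, then picks the banner with the best CTR in each segment. The data, the banner names and the `best_banner_per_segment` helper are all hypothetical. Your tracking platform will have its own export format.

```python
from collections import defaultdict

# (geo, device, banner, impressions, clicks) -- invented numbers
rows = [
    ("US", "mobile",  "banner_1", 5000, 150),
    ("US", "mobile",  "banner_2", 5000, 110),
    ("US", "desktop", "banner_1", 3000, 45),
    ("US", "desktop", "banner_2", 3000, 75),
    ("FR", "mobile",  "banner_1", 2000, 30),
    ("FR", "mobile",  "banner_2", 2000, 50),
]

def best_banner_per_segment(rows):
    """Return {(geo, device): (banner, ctr)} with the top banner per segment."""
    totals = defaultdict(lambda: [0, 0])  # [impressions, clicks]
    for geo, device, banner, impressions, clicks in rows:
        totals[(geo, device, banner)][0] += impressions
        totals[(geo, device, banner)][1] += clicks

    best = {}
    for (geo, device, banner), (impressions, clicks) in totals.items():
        ctr = clicks / impressions
        segment = (geo, device)
        if segment not in best or ctr > best[segment][1]:
            best[segment] = (banner, ctr)
    return best

best = best_banner_per_segment(rows)
for (geo, device), (banner, ctr) in sorted(best.items()):
    print(f"{geo}/{device}: {banner} wins with a CTR of {ctr:.1%}")
```

In this invented data set, the winner changes from one segment to the next, which is exactly the kind of thing an overall average would hide.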

Step 5: Repeat!

A/B Testing: Best Practices

Now that we have a better idea of what A/B Testing actually is, it’s time to learn how to make the most of it.

At CrakRevenue, we think that an effective test uses a wide range of banners along with what we like to call safe investments: at least one top-converting product and various PPL or PPS offers.

Further considerations...

- Let the test run for an adequate amount of time: if you stop your testing too soon, you might miss out on crucial data. Conversely, if you let the test go on for too long, you might lose a lot of money. Our solution: adjust the test’s duration according to the traffic volume.

- A/B Testing is all about cohesion and control. You want precise data, which requires careful planning and execution.

- Learn from the numbers: it can be hard not to follow your gut, but you must resist going against empirical data. When in doubt, do further testing.

Real-Life Example of A/B Testing

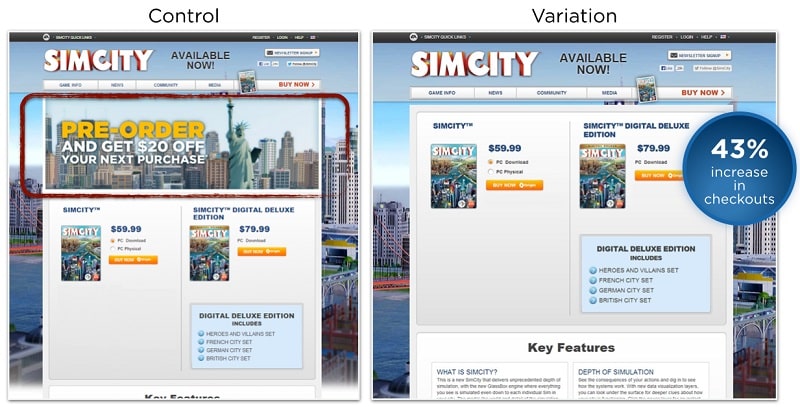

Back in 2013, Electronic Arts was able to significantly boost the sales of its video game SimCity 5 by running smart A/B Tests.

The publisher’s goal was simple: increase the number of purchases of its game after lower-than-expected preorders.

To achieve this, they worked with the product team at Maxis who hypothesized that moving the Call-to-Action would yield different — better — results.

So they started to test different variations of the landing page, moving the CTA around until they completely removed it.

A picture is worth a thousand words, right?

The variation without an offer resulted in 43% more purchases. Does that mean banners are dead? No! It only means that, in this case, the assumption that they drove sales was wrong.

Turns out, all people wanted was to purchase SimCity 5 without jumping through hoops.

With A/B Testing, you get real data about your campaigns and banners.

It’s up to you to use that data to your advantage.

What About A/B/n Testing?

Whereas A/B Testing focuses on a simple comparison (Version A and Version B), A/B/n Testing pits three or more variations of your content against each other.

The “n” represents any number (“Nth”) of different variations you want to test. For instance, Google Optimize allows up to 10 variations with its tool.

If you want to test on a much broader scope, then A/B/n Testing is optimal. You’ll be able to spot which pages convert better without the limits of A/B Testing.

On the flip side, you will have to divide your traffic among many different pages, which means tests have to run longer before you get statistically significant data.
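To get a feel for how much longer, here is a rough Python sketch. It assumes traffic is split evenly, that each variation is compared against Version A, and that you tighten the significance level with a Bonferroni correction to account for the extra comparisons. That correction is a common, conservative choice, not the only one. All numbers are hypothetical.

```python
import math
from statistics import NormalDist

def days_for_abn(variants, daily_visitors, baseline_ctr, expected_ctr,
                 alpha=0.05, power=0.8):
    """Rough test duration for an A/B/n test with an even traffic split."""
    comparisons = variants - 1               # each variation vs. Version A
    corrected_alpha = alpha / comparisons    # Bonferroni correction
    z_alpha = NormalDist().inv_cdf(1 - corrected_alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = (baseline_ctr * (1 - baseline_ctr)
                + expected_ctr * (1 - expected_ctr))
    effect = expected_ctr - baseline_ctr
    n_per_variant = math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)
    # Every version needs its own share of the daily traffic.
    return math.ceil(n_per_variant * variants / daily_visitors)

# Hypothetical spot: 20,000 visitors/day, CTR lift from 2.0% to 2.6%.
for k in (2, 3, 5, 10):
    days = days_for_abn(k, 20_000, 0.020, 0.026)
    print(f"{k:>2} versions -> about {days} day(s)")
```

The exact figures matter less than the trend: every extra variation takes a slice of your traffic and stretches the test.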

A/B/n Testing at Crak

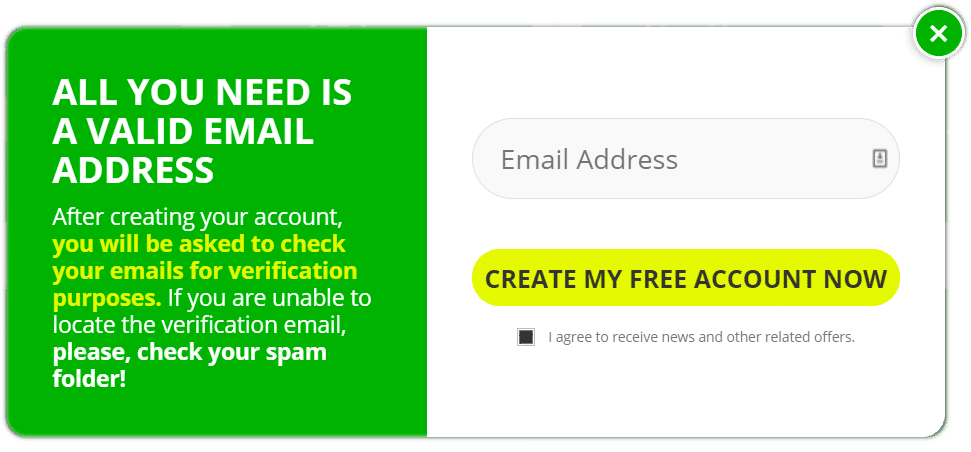

We thought you might be interested in a real experiment we’ve done here at CrakRevenue.

Last December, we tested 5 variants of a landing page to get the best results.

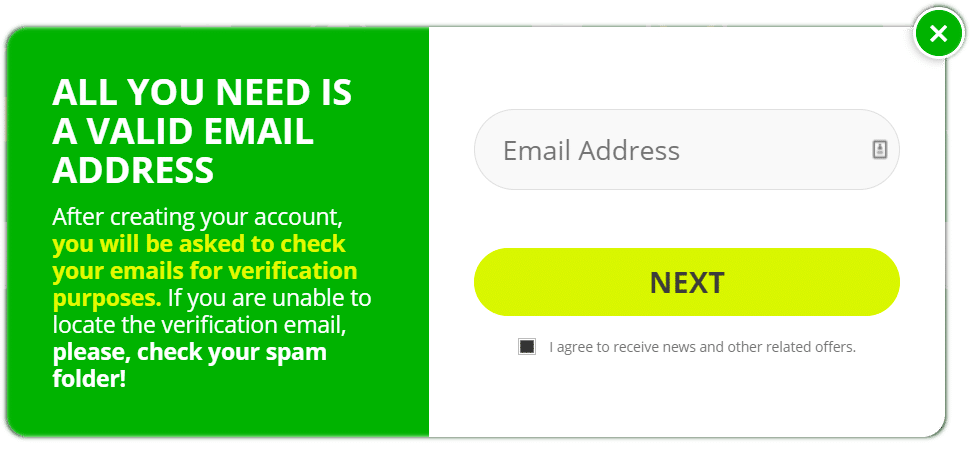

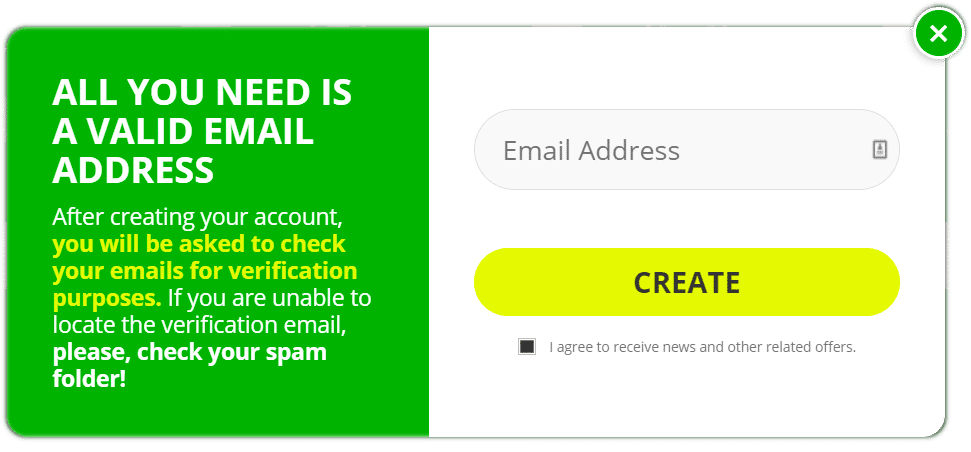

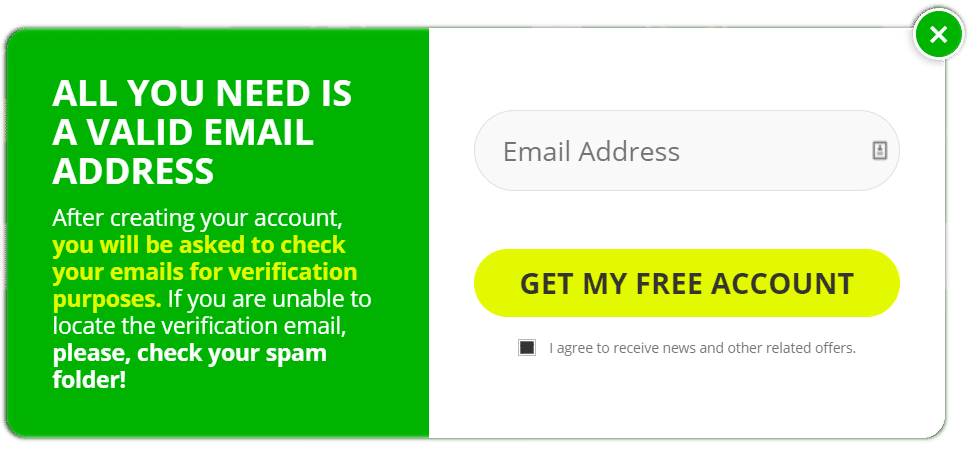

Take a look at the following images:

Our objective? Increase the CTR by using the best possible CTA.

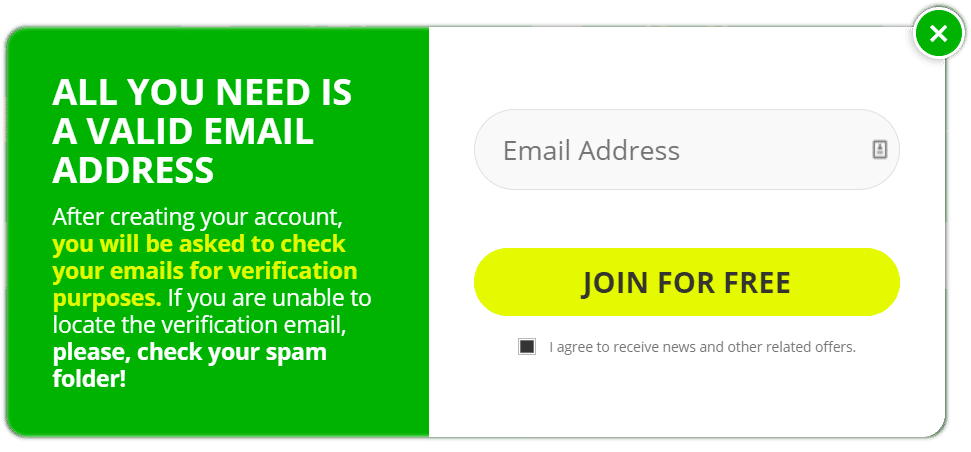

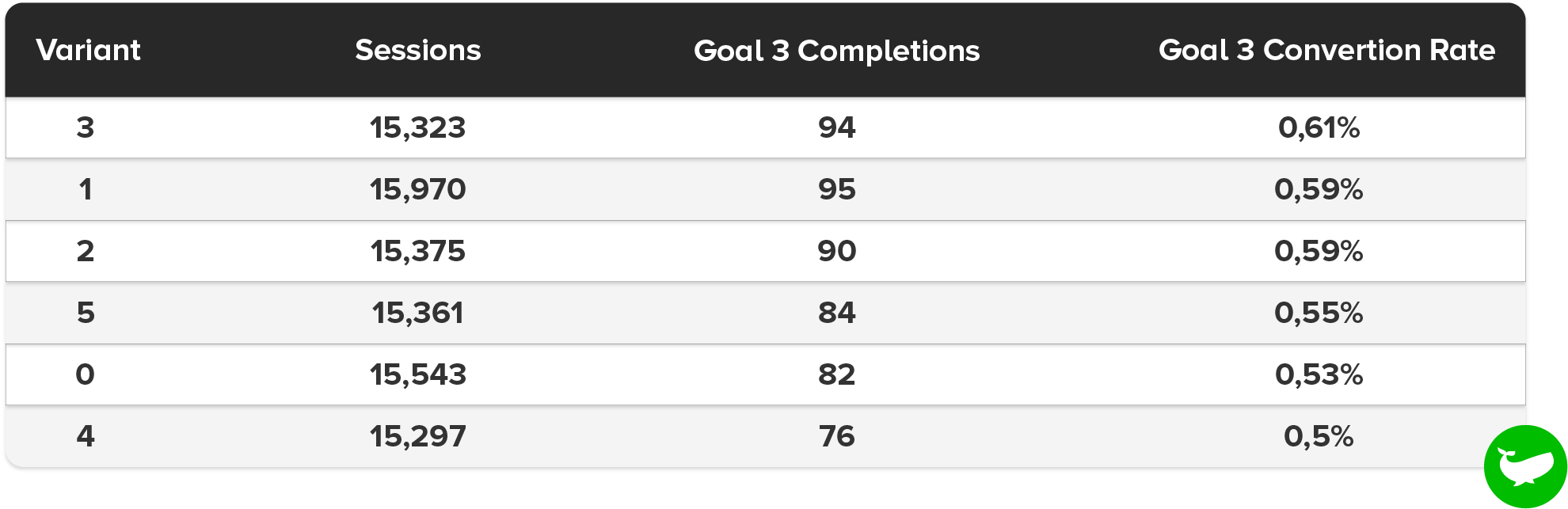

In the end, we managed to gather a lot of data… after all, we register 50 billion impressions per month across all our sites, giving us a lot of room to maneuver.

See for yourself:

Careful analysis of the data revealed that our “Next” landing page had the best conversion rate.

Sometimes, less is more in marketing. In this case, four simple letters yielded better results than explicit CTAs.

Further testing could tell us which button color (currently yellow) converts better. Marketers have been trying to find the answer for a very long time. The truth? It depends.

Context matters a lot in marketing. That said, there IS a general rule of thumb: make your CTAs pop!

Split testing campaigns separates the boys from the men...

So we hope you’ll make good use of A/B Testing in the near future.

Have you ever tried this method before? We want to hear your stories in the comments below.

Thanks for reading!